My Role

Usability Lead & Strategist

I partnered with the UI Designer and PM to de-risk a critical feature release, validating designs and restoring leadership trust.

The Team

1 UI Designer (Primary Partner)

2 Product Managers (Managed cross-team alignment)

9 Developers (Provided technical feasibility & feedback)

The Punchline

The Trigger: Following a rocky release of "Schedule 2.0," leadership lost confidence in the design team's testing rigor. I stepped in to lead validation for the upcoming "Find A Time" feature to ensure it was de-risked before launch.

The Process Win: I pioneered a new recruitment strategy using in-app intercepts (Pendo), bypassing the traditional "Customer Success bottleneck." This reduced testing turnaround from months to 2 weeks and increased participant volume by 166%.

The Risk: My rigorous async testing revealed a critical failure in the proposed design: a 69% success rate on a feature used to book millions of appointments.

The Outcome: I guided the team away from the initial design without derailing the project. We improved average time-to-discovery from 138s to 18s and comprehension from 69% to 95%, successfully restoring leadership trust.

The Challenge: High Stakes & Broken Processes

The Business Context: Stabilizing the Core

Coconut Software's "Schedule" is the heartbeat of our platform: it’s how banking staff manage their day. We had recently released "Schedule 2.0," but despite new features, usability gaps had created "noise" and frustration among users.

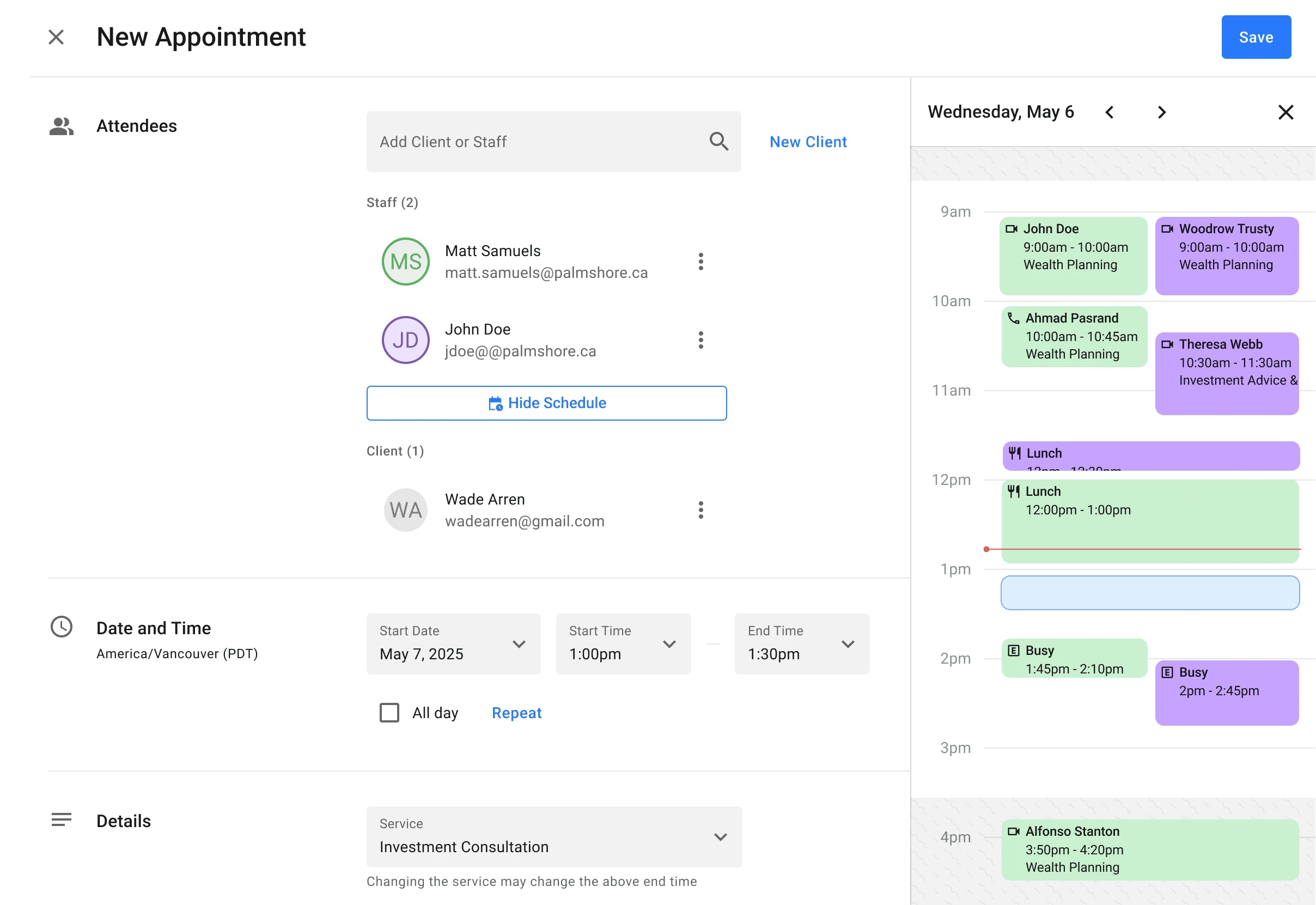

The Feature: "Find A Time" is the critical utility that allows staff to scan multiple calendars (their own and colleagues') to find a valid slot for a client.

The Risk: A failure here isn't just a UI annoyance; it leads to double bookings. This forces bank staff to apologize to customers and reschedule, damaging their credibility and our brand.

The Urgency: With leadership's trust in our testing rigor shaken, I had to ensure this feature was bulletproof. We could not rely on onboarding "crutches" to explain a bad interface; the utility had to be intuitive.

The Operational Pain: The Recruitment Bottleneck

While the mandate was to "test rigorously," the existing process made this nearly impossible within our timeline.

The Bottleneck: Historically, recruiting users took months because we relied on Customer Success Managers (CSMs) to manually email stakeholders on our behalf.

The Gap: This slow turnaround meant testing was often skipped or rushed. To meet our 1.5-month timeline, I couldn't just run a test; I had to invent a faster way to find the right users.

The Approach: Operational Innovation & Strategic Validation

My First Step: Solving the "Who" before the "What"

My immediate obstacle was the timeline. We had 6 weeks to run rigorous iterative testing.

The Innovation: I pioneered a new recruitment strategy using Pendo (in-app intercepts). Instead of relying on CSMs for outreach, we segmented users based on their live usage of the scheduling feature.

The Result: This allowed us to target active users directly. We reduced the feedback loop from months to 2 weeks and increased participation by 166% (from ~15 to 40 users per round), giving us a sample large enough to quantify results with confidence for the first time.

Assembling the "Whole-Brain" Team

I positioned myself not just as a tester, but as a strategic partner to the UI Designer.

The Dynamic: The UI Designer, PM, and Developers were confident in the initial design. Internally, even I felt it was a "step up" from our legacy tools.

My Role: Despite our collective confidence, I enforced a "gut check." My role was to prove whether that confidence was supported by data before we committed to code.

The Validation Strategy

The Hypothesis: "The new UI is cleaner, so users will naturally understand how to find a slot."

The Strategy: I set up asynchronous unmoderated testing focused on two high-risk metrics: Time to Discover and Error Recovery. I knew that if users couldn't find the feature or fix a conflict, the visual polish didn't matter.

The Work: Aligning with Mental Models

The "Blind Spot" Discovery

The first round of async testing was a wake-up call for all of us. We realized our internal bias had blinded us to a core usability issue.

The Mental Model Gap: As a "Google Shop," we were used to the term "Find a Time." However, our banking users primarily live in Microsoft Outlook. The button label didn't ring a bell for them; it was effectively invisible terminology.

The Proximity Issue: We had originally placed the button below the fold near the time selector. Users simply didn't see it because it was disconnected from the people they were trying to book.

The original "Find a Time" button buried below the fold.

The new "View Their Schedules" button, co-located with the Staff Selector.

The Pivot: Renaming and Relocating

We fixed discoverability with two high-impact, low-effort changes:

Renaming: We changed the label to "View Their Schedules", which aligned with the action users were actually trying to perform rather than abstract Google terminology.

Co-location: We moved the button from the time selector to the Staff Selector. This created a logical flow: Select Staff → View Their Schedules.

Solving for Comprehension (Without Scope Creep)

The second failure point was conflict resolution. Users saw an error but couldn't figure out who was busy.

The Problem: The original warning ("John Doe is unavailable") was generic and located far from the visual schedule. Users couldn't correlate the text to the visual gap.

The "Pragmatic" Fix: We couldn't overhaul the entire schedule grid without delaying the release. Instead, we improved the "signage" around it:

Explicit Messaging: We changed generic errors to specific feedback: "John Doe is not available in this location at the time selected."

Visual Indicators: We added a prominent visual alert near the schedule grid and status icons next to staff names. This allowed users to instantly scan the list and see the "red flag" next to the person causing the conflict.

We improved error comprehension not by rebuilding the grid, but by adding clear status indicators next to staff names, allowing users to identify conflicts in seconds.

The Outcome: Metrics & Trust

The Metrics: Validated Success

By shifting our strategy from "intuition" to "rigorous validation," we turned a high-risk feature into a massive win before a single line of code was shipped to production.

Discoverability: We reduced the average Time to Discover from 138 seconds to 18 seconds, a reduction of roughly 87%.

Comprehension: We improved the success rate for identifying and resolving conflicts from 69% to 95%.

Operational Velocity: The new Pendo-based recruitment process is now a standard asset. We reduced the feedback loop from months to 2 weeks and increased participant volume by 166% (from ~15 to 40 per round).
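For readers who want to check the numbers, the percentages above follow from simple relative-change arithmetic. The snippet below is a minimal sketch, not part of the original study tooling: the helper name `percent_change` is illustrative, and it assumes a baseline of exactly 15 participants, whereas the figure reported above is approximate (~15).

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, as a percentage of `before`."""
    return (after - before) / before * 100.0


# Figures reported in this case study. The participant baseline is
# approximate in the source (~15); 15 is assumed here for illustration.
participant_change = percent_change(15, 40)    # about +166.7 (reported as 166%)
discovery_change = percent_change(138, 18)     # about -87.0 (time to discover, seconds)

# Comprehension moved from 69% to 95%: a gain of 26 percentage points,
# which is about +37.7% relative to the 69% baseline.
comprehension_points = 95 - 69
comprehension_relative = percent_change(69, 95)

print(f"Participants per round: {participant_change:+.1f}%")
print(f"Time to Discover: {discovery_change:+.1f}%")
print(f"Comprehension: +{comprehension_points} points ({comprehension_relative:+.1f}% relative)")
```

Keeping percentage points (26) separate from relative change (about 38%) avoids overstating or understating the comprehension gain when the numbers are quoted elsewhere.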

The Team Impact: Restoring Confidence

Beyond the metrics, the biggest win was cultural.

Restored Trust: Leadership's confidence in the design team was restored. We proved we could catch failures before release.

Engineering Gratitude: The Product Manager and Engineering team specifically highlighted the value of the "low-scope" solution. By solving the usability gaps through clear signage and labeling rather than a complex grid overhaul, we kept the release on

What I Learned: A Masterclass in Pragmatic Leadership

1. Operations Enable Design: This project reinforced that if the process is broken (e.g., recruitment takes months), the design will suffer. Stepping up to fix the Ops bottleneck was just as important as fixing the UI.

2. Mental Models > Internal Jargon: We fell into the trap of designing for ourselves ("Google Shop") rather than our users ("Outlook Users"). It was a stark reminder that internal consensus does not equal user clarity.

3. Validation is a Risk Mitigation Tool: Testing is often viewed as a "slow-down," but here it was an accelerator. By catching the 69% success rate (a 31% failure rate) early, we avoided a disastrous release that would have required weeks of hotfixes and apologies to banking clients.